30.10.2025, Online event with UiA students

Vilde Elvemo & Ingebjørg Gregersen

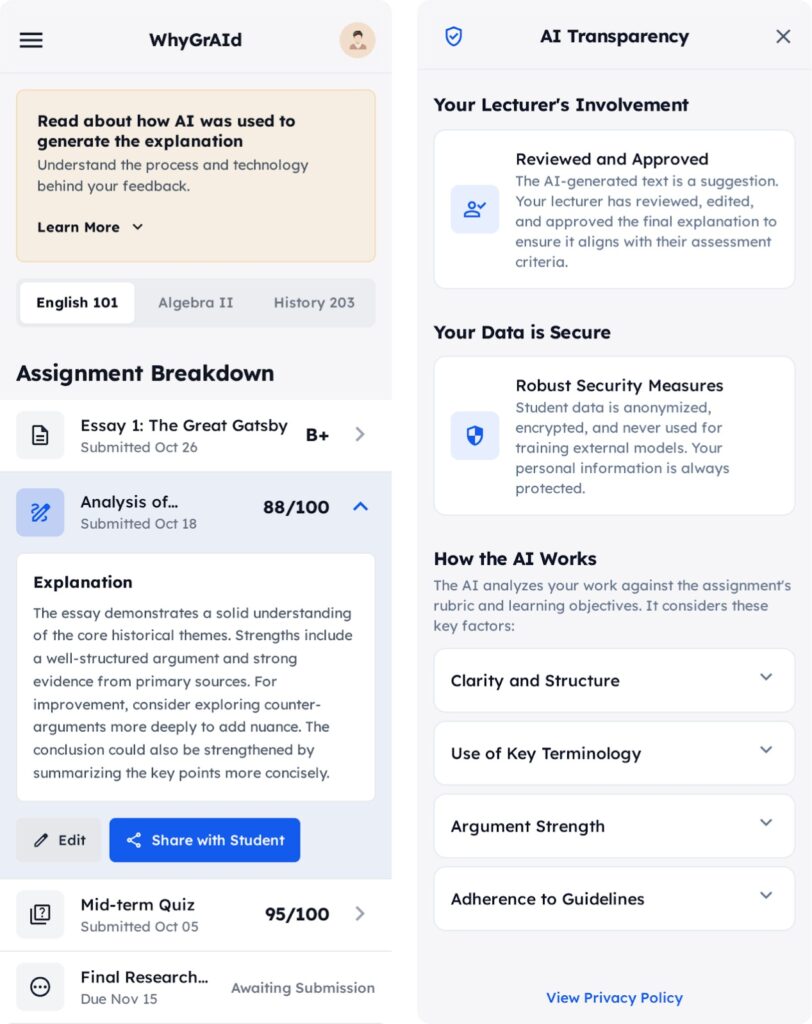

At the end of October, we carried out a design challenge in which students explored how AI can be applied in a transparent and responsible way when creating exam explanations.

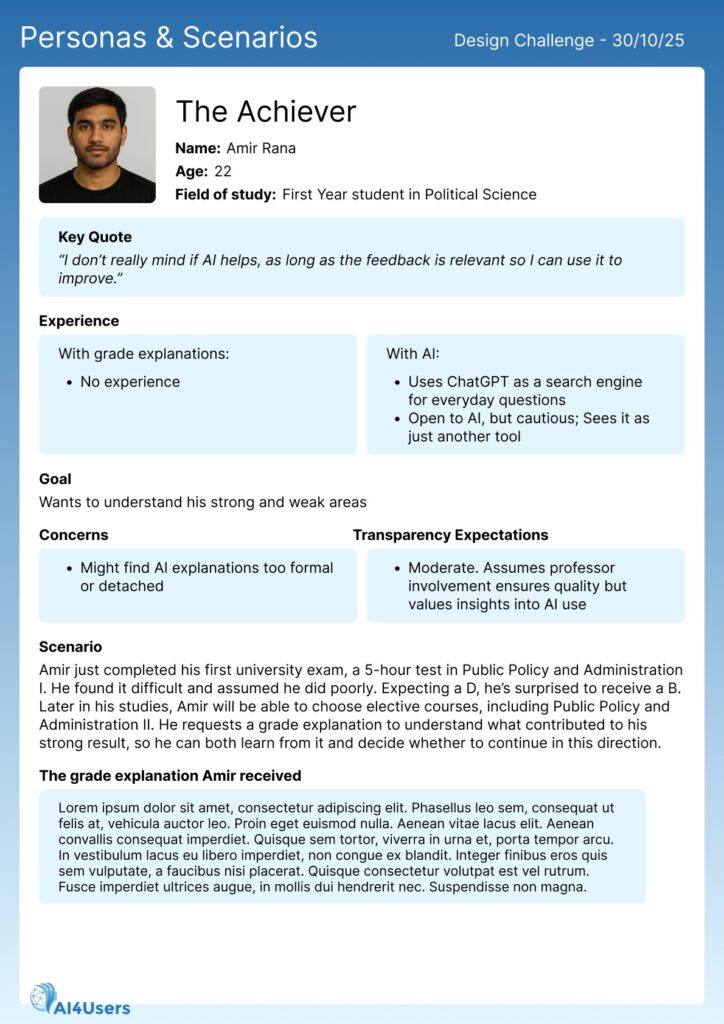

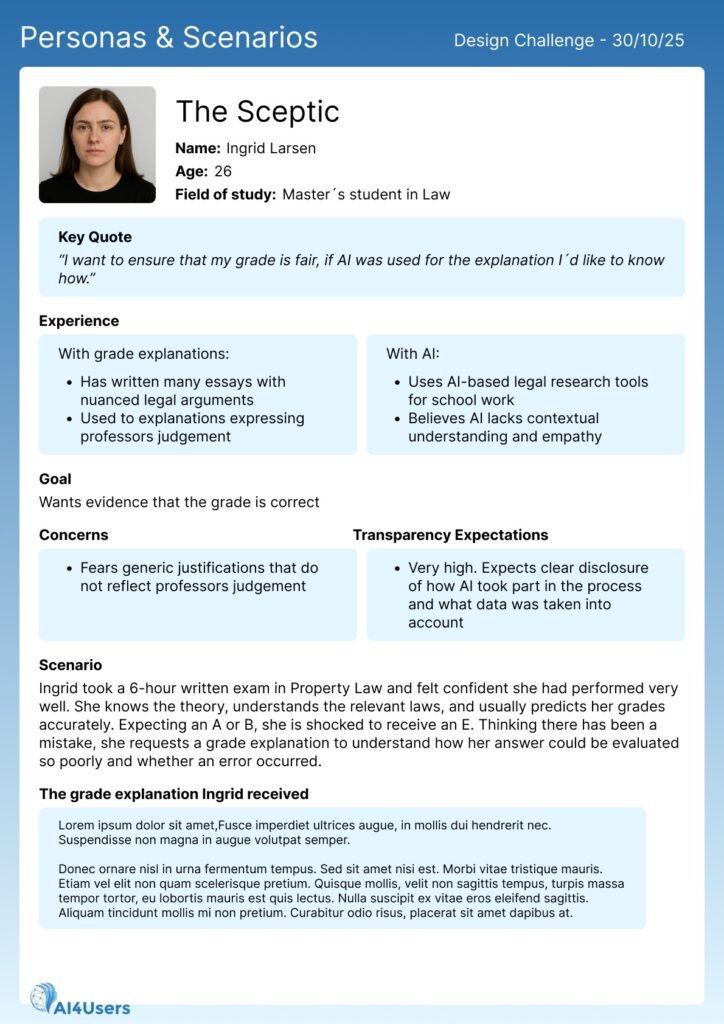

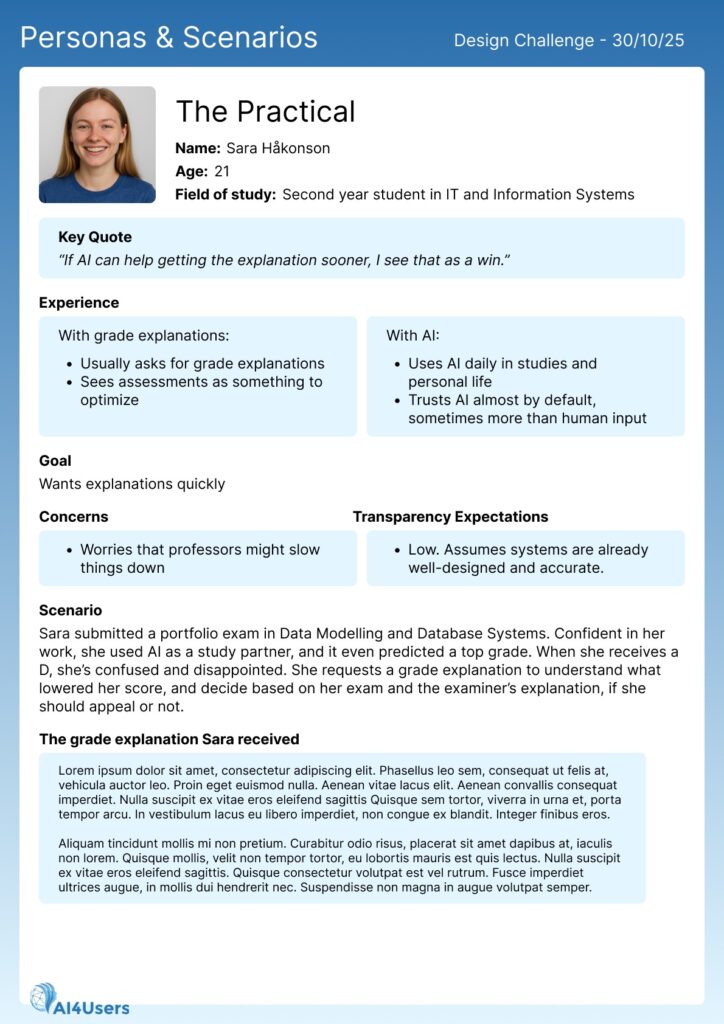

The participants were divided into groups and tasked with designing how exam explanations drafted with the support of an AI tool could be presented to students. As part of the challenge, they were given a concrete use case, along with personas and scenarios (see Read more), to guide their design work and ensure a shared understanding of the context and user needs.

After a working session, each group was asked to present its design solution, its reflections on what should and should not be included, and its own views on the use of AI in this context.

This activity offered valuable insight into students’ expectations, preferences, and ideas for how AI support can be used transparently in ways that feel both trustworthy and meaningful to them.

AI-generated illustration (ChatGPT)

Read more

Background

According to the Act relating to Universities and University Colleges (§11-8), students in Norway have the right to request an explanation for their exam grade. When a student requests an explanation, the lecturer must provide it within two weeks.

Lecturers report that this process is time-consuming and that they need more efficient solutions that still preserve the learning value of the explanation. To address this, universities in Norway and elsewhere are exploring the use of AI as an assistive tool for drafting exam explanations.

If universities decide to adopt such a tool, several important questions arise, one of which is how these AI-assisted explanations should be presented to students. Since students are required to be transparent about their own use of AI in their submitted work, universities feel a corresponding obligation to be transparent in return.

However, students have different levels of understanding and expectations regarding AI. Some are comfortable with technical details and prefer in-depth explanations; others find such information confusing or unnecessary and prefer simple, human-like explanations. Some want full transparency; others only want the key takeaway.

To investigate how these challenges could be addressed and what kinds of presentation formats students themselves consider trustworthy, understandable, and useful, we carried out an online design challenge with students from the UiA course IS-117-1 “Introduction to Human-Centered Artificial Intelligence”.

Use case

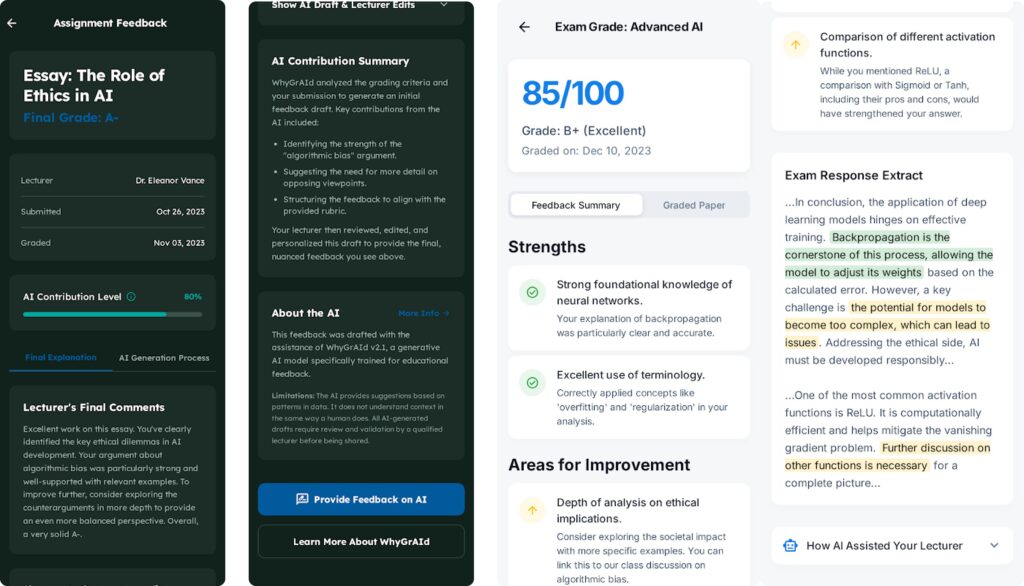

The AI tool, named WhyGrAId, supports lecturers who need to write an explanation after they have assessed and graded an exam. To get this support, the lecturer uploads:

- The exam question

- The grading guide/model answer

- The student’s answer

- The grade given

- Extra instructions to the AI or comments about the assessment, if any

WhyGrAId generates draft text that the lecturer must review and edit before making the final explanation available to the student.